Leadership Lessons: AI Agent Frameworks

As AI models (especially LLMs) become more powerful, a critical challenge has emerged: how to effectively connect them to external data sources, software tools, and systems. On its own, an LLM is limited to what it learned during training and cannot take actions in external systems.

Protocols and frameworks such as MCP (Model Context Protocol) and A2A (Agent-to-Agent) provide standardized ways to bridge this gap. MCP, for example, defines how servers (which provide tools and data) communicate with clients (such as an AI assistant or agent), so the AI can read files, query databases, or execute code. Agent-to-agent approaches additionally let an agent delegate work to other agents.

The goal is to create a composable, secure, and interoperable ecosystem where AIs can dynamically extend their capabilities beyond the model's built-in knowledge.

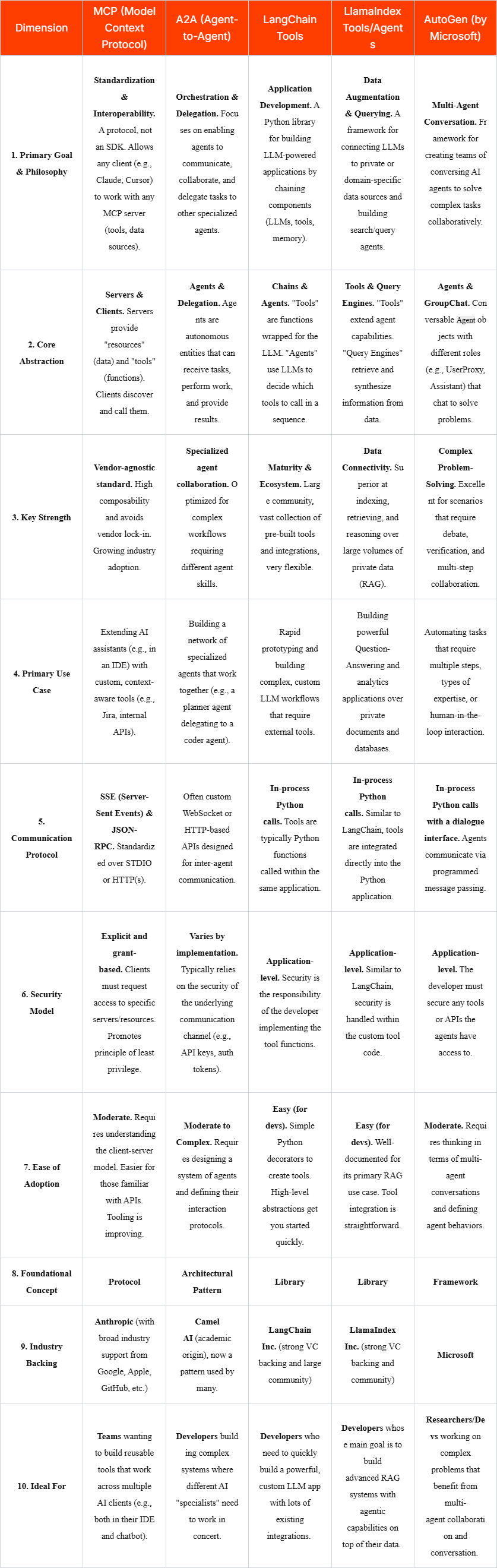

Framework Comparison

Summary and Key Takeaways

MCP (The Standardizer): Think of MCP as the USB standard for AI tools. Its goal is to create a universal plug-and-play ecosystem. You build a tool once (an MCP Server), and it should work in any application that supports the protocol (an MCP Client such as Claude Desktop or Cursor). Choose this when your tools need to be reusable across different clients and vendors.
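To make the "build once, plug in anywhere" idea concrete, here is a minimal sketch of the message exchange behind a tool call. MCP messages are JSON-RPC 2.0, and tools are listed and invoked with the `tools/list` and `tools/call` methods. Everything else below is an assumption for illustration: the `word_count` tool, the `handle_request` and `call_tool` helpers, and the heavily simplified result shapes. A real server would also handle initialization, input schemas, and a transport, and would normally be built with an official SDK rather than by hand.

```python
import json

# Hypothetical tool exposed by this toy server (not part of any real MCP server).
TOOLS = {
    "word_count": lambda arguments: len(arguments["text"].split()),
}


def _error(request_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}
    )


def handle_request(raw: str) -> str:
    """Toy server side: handle one JSON-RPC request and return the response."""
    request = json.loads(raw)
    method = request.get("method")
    if method == "tools/list":
        # Simplified: real listings also carry descriptions and input schemas.
        result = {"tools": [{"name": name} for name in TOOLS]}
    elif method == "tools/call":
        params = request["params"]
        tool = TOOLS.get(params["name"])
        if tool is None:
            return _error(request["id"], -32602, "Unknown tool")
        value = tool(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}]}
    else:
        return _error(request["id"], -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


def call_tool(name: str, arguments: dict, request_id: int = 1) -> str:
    """Toy client side: build the request any compatible client would send."""
    return json.dumps(
        {
            "jsonrpc": "2.0",
            "id": request_id,
            "method": "tools/call",
            "params": {"name": name, "arguments": arguments},
        }
    )


response = json.loads(handle_request(call_tool("word_count", {"text": "build once run anywhere"})))
print(response["result"]["content"][0]["text"])
```

The point for decision-makers is that the client only needs to know the message format, not the tool's implementation, which is what lets one server serve many clients.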

A2A (The Collaborator): A2A is treated here as an architectural pattern for building a "team" or "society" of AI agents, in which agents delegate tasks to each other. Google has also published an open protocol under the name Agent2Agent (A2A) that standardizes this kind of agent-to-agent communication, so the pattern can be adopted with or without a formal standard. Choose this pattern when your problem is too complex for a single agent and requires specialized sub-agents.
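The delegation idea can be sketched in a few lines of plain Python. This is an illustration of the pattern only, not an implementation of any A2A specification: the `Agent` and `Coordinator` classes, the skill names, and the lambda "agents" are all hypothetical stand-ins for LLM-backed services.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Agent:
    """A specialist agent; `handle` stands in for an LLM-backed worker."""
    name: str
    skill: str
    handle: Callable[[str], str]


class Coordinator:
    """Routes each task to whichever agent advertises the required skill."""

    def __init__(self, agents: List[Agent]) -> None:
        self._by_skill: Dict[str, Agent] = {agent.skill: agent for agent in agents}

    def delegate(self, skill: str, task: str) -> str:
        agent = self._by_skill.get(skill)
        if agent is None:
            raise LookupError(f"no agent offers skill {skill!r}")
        return f"{agent.name}: {agent.handle(task)}"


researcher = Agent("researcher", "research", lambda task: f"notes on {task}")
writer = Agent("writer", "write", lambda task: f"draft based on {task}")
coordinator = Coordinator([researcher, writer])


def run_pipeline(topic: str) -> str:
    """Chain two delegations: research first, then writing from the notes."""
    notes = coordinator.delegate("research", topic)
    return coordinator.delegate("write", notes)


print(run_pipeline("MCP"))
```

The coordinator knows only which skill each agent offers, so specialists can be added or replaced without changing the pipeline logic.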

LangChain/LlamaIndex Tools (The Application Builders): These are powerful libraries for building a single, self-contained application that uses an LLM. The tools are defined directly in your application code (typically Python). Choose these for rapid development where the entire application is under your control and you don't need to share tools with external clients.
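What "tools integrated directly into your code" means in practice can be shown without any library. The sketch below is a plain-Python stand-in for the idea and deliberately does not use the LangChain or LlamaIndex APIs: the `tool` decorator, `TOOL_REGISTRY`, and the `{"name": ..., "args": ...}` call format are assumptions made for illustration.

```python
from typing import Callable, Dict

# In-process registry: tools live in the same codebase as the agent.
TOOL_REGISTRY: Dict[str, Callable[..., object]] = {}


def tool(func: Callable[..., object]) -> Callable[..., object]:
    """Register a plain function as a tool under its own name."""
    TOOL_REGISTRY[func.__name__] = func
    return func


@tool
def add(a: float, b: float) -> float:
    """Add two numbers."""
    return a + b


@tool
def shout(text: str) -> str:
    """Upper-case a string."""
    return text.upper()


def run_tool_call(call: dict) -> object:
    """Dispatch a tool call shaped like one a model might emit (hypothetical format)."""
    return TOOL_REGISTRY[call["name"]](**call["args"])


print(run_tool_call({"name": "add", "args": {"a": 2, "b": 3}}))
```

Because the registry is an ordinary dictionary inside the application, adding a tool is a one-function change, but nothing outside this process can discover or call it.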

AutoGen (The Conversationalist): This is a framework for creating teams of agents that talk to each other. It's less about standardizing tool calls and more about structuring the conversation between agents (and humans) to solve problems. Choose this for scenarios that require debate, verification, and multi-step planning.
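The conversation-centered approach can be illustrated with a propose-and-verify loop. This sketch does not use AutoGen's API. The `converse` function, the `APPROVED` convention, and the scripted proposer and verifier are hypothetical stand-ins for LLM-backed agents, chosen so the example runs deterministically.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, text)


def converse(
    proposer: Callable[[str, str], str],
    verifier: Callable[[str], str],
    task: str,
    max_rounds: int = 3,
) -> List[Message]:
    """Alternate proposer and verifier turns until approval or the round limit."""
    transcript: List[Message] = [("user", task)]
    feedback = ""
    for _ in range(max_rounds):
        proposal = proposer(task, feedback)
        transcript.append(("proposer", proposal))
        feedback = verifier(proposal)
        transcript.append(("verifier", feedback))
        if feedback == "APPROVED":
            break
    return transcript


def scripted_proposer(task: str, feedback: str) -> str:
    """Gives a wrong answer first, then corrects it once it receives feedback."""
    return "2 + 2 = 4" if feedback else "2 + 2 = 5"


def scripted_verifier(proposal: str) -> str:
    """Checks the arithmetic in a proposal of the form 'a + b = c'."""
    left, right = proposal.split("=")
    a, b = (int(part) for part in left.split("+"))
    return "APPROVED" if a + b == int(right) else "REJECTED: arithmetic is wrong"


for speaker, text in converse(scripted_proposer, scripted_verifier, "What is 2 + 2?"):
    print(f"{speaker}: {text}")
```

The value here comes from the structure of the exchange: a second agent catches the first agent's mistake, and the round limit keeps the conversation from running indefinitely.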

The landscape is evolving quickly. Notably, frameworks like LangChain and LlamaIndex are actively adding support for MCP, recognizing the value of a standard protocol. This means you could use LangChain to build an agent that leverages tools from both its native ecosystem and any external MCP server, combining the strengths of both approaches.
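One way to picture this combination is an adapter that makes remote tools look like local ones. The sketch below is an assumption-laden illustration rather than any library's actual integration: `FakeRemoteServer` stands in for an external tool server reached over a protocol, and `wrap_remote` and `build_toolbox` are hypothetical helper names.

```python
from typing import Callable, Dict, List


def native_uppercase(text: str) -> str:
    """A tool defined directly in the application."""
    return text.upper()


class FakeRemoteServer:
    """Stand-in for an external tool server; a real one sits behind a transport."""

    def list_tools(self) -> List[str]:
        return ["reverse"]

    def call(self, name: str, arguments: dict) -> str:
        if name == "reverse":
            return arguments["text"][::-1]
        raise KeyError(name)


def wrap_remote(server: FakeRemoteServer, name: str) -> Callable[..., str]:
    """Adapt one remote tool so it can be called like a local function."""

    def call(**kwargs: object) -> str:
        return server.call(name, kwargs)

    return call


def build_toolbox(
    native: Dict[str, Callable[..., str]], server: FakeRemoteServer
) -> Dict[str, Callable[..., str]]:
    """Merge native and remote tools; native tools win on a name clash."""
    toolbox = dict(native)
    for name in server.list_tools():
        toolbox.setdefault(name, wrap_remote(server, name))
    return toolbox


toolbox = build_toolbox({"uppercase": native_uppercase}, FakeRemoteServer())
print(toolbox["uppercase"](text="mcp"), toolbox["reverse"](text="abc"))
```

From the agent's point of view, both kinds of tool are entries in one dictionary, so the choice between in-process and protocol-based tools can be made per tool rather than per application.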