Brain Teaser: The Scaling Paradox

A startup’s AI system performance follows this pattern:

1-100 users: 99% uptime

101-1000 users: 95% uptime

1001-10000 users: 90% uptime

10001+ users: 85% uptime

If downtime costs $100 per user per hour, and they gain 100 users per week, starting with 50 users, when does the weekly downtime cost first exceed $10,000?

Answer:

The weekly downtime cost first exceeds $10,000 after 1 week (when they reach 150 users and drop to 95% uptime).

The Math:

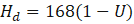

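Let the weekly hours be \(H = 24 \times 7 = 168\). If uptime is \(u\), then expected downtime hours per week is:

\[ D = H(1 - u) = 168(1 - u) \]

Downtime cost is $100 per user per downtime hour, so weekly downtime cost at \(N\) users is:

\[ C(N) = N \cdot 168(1 - u) \cdot 100 \]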

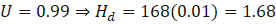

Week 0 (start): 50 users, 99% uptime

\[ C(50) = 50 \cdot 1.68 \cdot 100 = 50 \cdot 168 = \$8{,}400 \]

So it’s below $10,000 at the start.

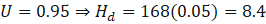

After 1 week: 150 users (gain 100), now in the 101–1000 tier at 95% uptime

\[ C(150) = 150 \cdot 8.4 \cdot 100 = 150 \cdot 840 = \$126{,}000 \]

That’s above $10,000.
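
The week-by-week check can also be sketched in a few lines of Python. This is a minimal sketch, not part of the original puzzle: the names `uptime_for`, `weekly_cost`, and `first_week_over` are made up for illustration, and it assumes the user count only changes once per week, in a single jump of 100.

```python
HOURS_PER_WEEK = 24 * 7        # 168 hours in a week
COST_PER_USER_HOUR = 100       # dollars per user per downtime hour (from the puzzle)


def uptime_for(users: int) -> float:
    """Return the uptime fraction for a user count, using the puzzle's tiers."""
    if users <= 100:
        return 0.99
    if users <= 1_000:
        return 0.95
    if users <= 10_000:
        return 0.90
    return 0.85


def weekly_cost(users: int) -> float:
    """Weekly downtime cost in dollars: users * downtime hours * cost rate."""
    downtime_hours = HOURS_PER_WEEK * (1 - uptime_for(users))
    return users * downtime_hours * COST_PER_USER_HOUR


def first_week_over(threshold: float, start_users: int = 50,
                    weekly_gain: int = 100, max_weeks: int = 520):
    """Return (week, users) for the first week whose cost exceeds threshold.

    Assumption: users are counted once per week, after that week's gain.
    Returns None if the threshold is never exceeded within max_weeks.
    """
    users = start_users
    for week in range(max_weeks + 1):
        if weekly_cost(users) > threshold:
            return week, users
        users += weekly_gain
    return None


if __name__ == "__main__":
    for week, users in enumerate([50, 150]):
        print(f"week {week}: {users} users, cost ${weekly_cost(users):,.2f}")
    print(first_week_over(10_000))
```

Under the weekly-step assumption, `first_week_over(10_000)` returns `(1, 150)`, matching the answer above. Note that inside the 99% tier the cost is 168 dollars per user, so the threshold would already be passed at 60 users if growth were tracked more finely than once per week.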